Every developer tool in your stack was built with the same unstated assumption: a human is operating it. A human who can scan a terminal, visually parse a CI dashboard, recognize when a config file isn’t being read, and intuitively distinguish “no output” from “silent failure.”

Coding agents share none of these abilities. And the tools don’t know that.

A Self-Hosted Runner Changes the Economics, Not Just the Bill

GitHub-hosted runners bill per minute. For a solo operation running CI across six repositories — build, test, coverage, Playwright visual regression, security scanning with gitleaks and semgrep — the minutes accumulate. But the cost problem isn’t the interesting one.

The interesting problem is what happens on the agent’s side while CI runs. A coding agent waiting for a GitHub-hosted runner is burning tokens against its context window — and it can’t predict when the job will start because shared runner queue times are nondeterministic. The agent either polls too early (wasting context on “still queued” responses) or waits too long (wasting the human’s wall-clock time).

A containerized self-hosted runner changes this equation. The runner image pre-bakes the entire toolchain — .NET SDK, Node.js, Playwright, Docker CLI — so there’s no per-job setup overhead. What took 2-3 minutes of “installing dependencies” on a hosted runner takes zero. The queue is local, so the agent’s polling delay becomes predictable.

The design is deliberately portable. It starts with docker compose up -d on a laptop, a VPS, or any Docker host. The workflow templates reference ${{ vars.RUNNER_LABEL || 'ubuntu-latest' }}: set the variable on a repo to route heavy jobs to the local runner, unset it to revert. Each repo opts in independently, and the fallback is always GitHub-hosted. No big bang migration.

But the real shift isn’t operational — it’s behavioral. When CI is free and fast, you can instruct agents to run the full quality gate before creating a PR. Failure detection moves left by an entire cycle. The agent catches the broken test before the PR exists, not after a reviewer flags it. That’s a different feedback loop — and it only becomes practical when CI stops being a metered resource.
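
As a rough illustration of that pre-PR gate, here is a minimal Python sketch. The function name run_quality_gate and the step list are hypothetical, not taken from my actual setup; the idea is only that the agent gets one explicit pass/fail message with a short tail of output rather than a full log.

```python
import subprocess
import sys
from typing import List, Sequence, Tuple

# Hypothetical step list; substitute whatever your repo's gate really runs.
EXAMPLE_STEPS = [
    ("build", ["dotnet", "build"]),
    ("test", ["dotnet", "test"]),
    ("e2e", ["npx", "playwright", "test"]),
]


def run_quality_gate(steps: Sequence[Tuple[str, List[str]]]) -> Tuple[bool, str]:
    """Run each step in order and stop at the first non-zero exit code.

    Returns (passed, message). The message is kept short so the agent
    receives an explicit signal instead of an entire build log.
    """
    for name, command in steps:
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            # Keep only the last few lines: enough to diagnose, not enough to flood context.
            combined = (result.stdout + result.stderr).strip()
            tail = "\n".join(combined.splitlines()[-5:])
            return False, f"FAILED at '{name}' (exit {result.returncode}): {tail}"
    return True, f"PASSED {len(steps)} step(s)"
```

An agent instruction can then be as simple as "call run_quality_gate and only open the PR if it passes."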

Silent Failures: When the Error Signal Is the Absence of Signal

A YAML parse error in a GitHub Actions workflow produces zero runs. Not a failed run. Not an error in the logs. Nothing. total_count: 0.

For a human, this is confusing but recoverable — you go to the Actions tab, notice nothing ran, squint at the YAML, find the typo. For a coding agent, this is a diagnostic dead-end. The agent’s natural verification loop after pushing a workflow change is: find the triggered run, check its status, read the logs if it failed. When the YAML doesn’t parse, that loop returns nothing at every step. The agent concludes the workflow hasn’t triggered yet and waits. Or it concludes something unrelated is wrong and starts investigating a phantom problem.

The fix is encoding an alternative diagnostic path into the agent’s post-push logic: gh workflow run --field dry_run=true (where dry_run is an input the workflow itself declares), which returns the parse error directly from the API. But the agent won’t discover this on its own. The error signal is the absence of signal, and agents don’t reason well about things that should exist but don’t.
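
To make "absence of signal" something the agent can act on, the post-push check has to turn "zero runs" into an explicit verdict. The sketch below assumes the agent has already fetched the run list as JSON with the total_count and workflow_runs fields that GitHub's run-listing endpoint returns. The function name, the verdict strings, and the 60-second grace period are my own illustrative choices.

```python
from typing import Any, Dict


def diagnose_after_push(runs_response: Dict[str, Any],
                        seconds_since_push: float,
                        grace_seconds: float = 60.0) -> str:
    """Classify the state of CI after pushing a workflow change.

    grace_seconds is an assumed threshold: how long "no runs" still counts
    as "not triggered yet" before it is treated as a possible parse failure.
    """
    total = runs_response.get("total_count", 0)
    if total > 0:
        latest = runs_response["workflow_runs"][0]
        return (f"run-found: status={latest.get('status')} "
                f"conclusion={latest.get('conclusion')}")
    if seconds_since_push < grace_seconds:
        return "wait: no runs yet, still inside the grace period"
    # Zero runs after the grace period is itself the error signal.
    return ("suspect-invalid-workflow: zero runs after grace period; "
            "validate the workflow file")
```

The point of the third branch is that silence stops being ambiguous: once the grace period expires, the agent is told to go validate the workflow instead of waiting or chasing an unrelated problem.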

This generalizes beyond GitHub Actions. Any system where a configuration error produces silence instead of a failure message is a trap for agents. Agents are trained on patterns where errors are explicit. When the system’s failure mode is “nothing happens,” the agent’s training data has no matching pattern to draw from.

Wrong Config File, Right Intention

An agent tasked with modifying a container environment will reach for devcontainer.json. It’s the standard. It’s well-documented. It’s what the training data says to edit.

But if the actual container lifecycle is controlled by a custom shell script — one that bypasses VS Code entirely and launches the container with docker run -it — that edit does nothing. The agent confidently modifies a file that no process reads. The change “succeeds” (the file is valid, the commit is clean, the PR looks reasonable), but the runtime behavior doesn’t change. And because nothing breaks, there’s no error to trigger a retry or investigation.

This isn’t a bug in the agent. It’s an inherited assumption. The agent’s training data overwhelmingly associates “container configuration” with devcontainer.json because that’s what the ecosystem standardized on. Any non-standard runtime entry point — a custom CLI wrapper, a Makefile-driven build, a shell script that replaces docker-compose — is invisible to the agent unless you surface it explicitly.

The fix isn’t documenting every tool in the agent’s prompt. It’s building a discovery step into the agent’s workflow: how does this actually start? before what should I change? The agent needs to verify which config file is load-bearing before modifying any of them. This is obvious when you say it out loud. It’s consistently missed in practice because the human operator already knows the answer and forgets the agent doesn’t.
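
One way to sketch that discovery step is a scan that runs before any config edit and asks which files in the repo launch containers at all. Everything here is a heuristic I am assuming for illustration: the marker strings, the file-name filters, and the rule that devcontainer.json only counts as load-bearing when no script launches containers directly.

```python
from pathlib import Path
from typing import Dict, List

# Assumed markers and file filters; extend them for your own repo layout.
LAUNCH_MARKERS = ("docker run", "docker compose", "docker-compose")
CANDIDATE_SUFFIXES = {".sh", ".bash", ".mk"}
CANDIDATE_NAMES = {"Makefile", "makefile", "justfile"}


def find_launch_points(repo_root: str) -> Dict[str, List[str]]:
    """Map each script-like file to the container-launch commands it mentions."""
    found: Dict[str, List[str]] = {}
    for path in sorted(Path(repo_root).rglob("*")):
        if not path.is_file() or ".git" in path.parts:
            continue
        if path.suffix not in CANDIDATE_SUFFIXES and path.name not in CANDIDATE_NAMES:
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file: skip rather than fail the whole scan
        hits = [marker for marker in LAUNCH_MARKERS if marker in text]
        if hits:
            found[str(path.relative_to(repo_root))] = hits
    return found


def devcontainer_is_load_bearing(repo_root: str) -> bool:
    """Heuristic: trust devcontainer.json only if no script launches containers itself."""
    has_devcontainer = any(Path(repo_root).rglob("devcontainer.json"))
    return has_devcontainer and not find_launch_points(repo_root)
```

If find_launch_points returns anything, the agent should read those files before it touches devcontainer.json. A text scan like this will miss launchers it was not told to look for, so it narrows the question rather than answering it.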

What Surprises the Agent Itself

Here’s something I find worth sharing: when I asked the coding agent which of these patterns it would struggle with most (not hypothetically, but based on how it actually processes information), the answer wasn’t the verbose output or the streaming pollution. Those are quantitative problems. The agent can be told “filter this” or “don’t use --watch” and it complies.

The two it flagged as structurally hard: the absence-of-signal failure and the wrong config file.

For the silent YAML failure, the agent’s reasoning is straightforward. Its training data overwhelmingly associates errors with explicit signals — exceptions, exit codes, log lines, HTTP status codes. A system that fails by producing nothing has no pattern to match against. The agent can’t self-correct because the feedback loop is missing entirely. It’s not that the agent reasons poorly about the problem — it’s that the problem never surfaces as something to reason about.

For the devcontainer.json trap, it’s worse. The agent would reach for that file with high confidence. The edit would succeed. The commit would be clean. The PR would look reasonable. And nothing would change at runtime. Because nothing breaks, the agent never learns the edit was meaningless. It would move on, satisfied, having produced a technically valid change with zero operational effect. The only way to prevent this is to inject the discovery step from outside — the agent won’t generate it on its own because it doesn’t know what it doesn’t know.

This isn’t an argument against using agents. It’s a map of where human oversight is structurally necessary — not because the agent is careless, but because certain failure modes are invisible to any system that relies on explicit feedback to learn.

The Audit Question

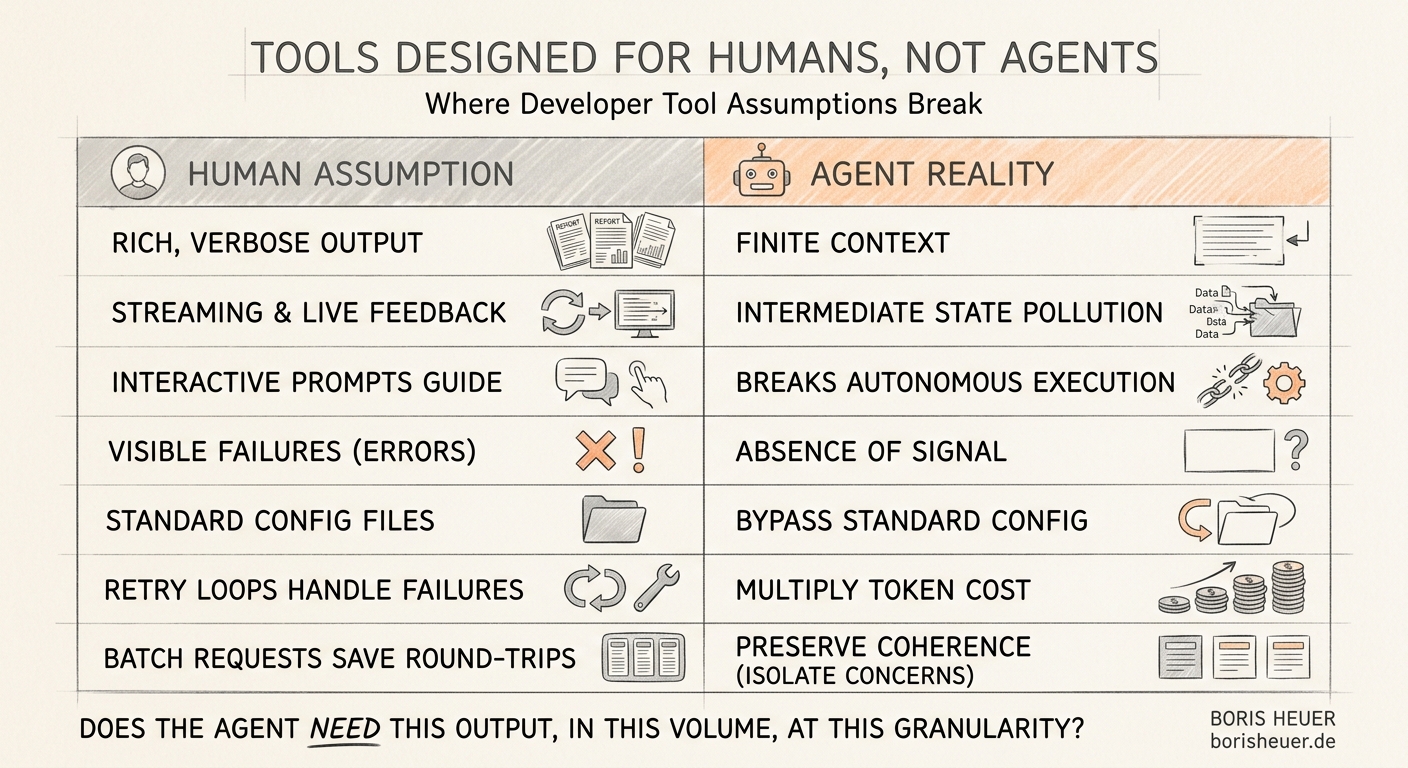

Every tool in a developer stack encodes assumptions about its consumer. Most of these assumptions are invisible because they’ve been true for the tool’s entire existence. Here are the ones I keep running into:

The optimization strategy for an agentic harness isn’t “make things faster.” It’s asking one question about every tool interaction: does the agent actually need this output, in this volume, at this granularity?

Most of the time, the answer is no. The tool is delivering a human-quality experience to a consumer that needs machine-quality precision. Less output, fewer fields, one result instead of a stream, and explicit failure signals instead of visual cues.

If you’re running coding agents against real repositories and real CI pipelines, this audit is worth doing systematically. Not because any single tool interaction is expensive — but because they compound. A verbose build log here, a streaming status check there, an unbounded file read somewhere else — and the agent hits 60% context utilization before it’s halfway through the task. After that, coherence degrades and the human has to intervene.
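
The compounding effect is easy to make concrete. The sketch below trims tool output to its tail and keeps a running estimate of context use, flagging the 60% mark mentioned above. The names trim_output and ContextBudget are hypothetical, and the four-characters-per-token estimate is a crude assumption, not a real tokenizer.

```python
from dataclasses import dataclass


def trim_output(text: str, max_lines: int = 20) -> str:
    """Keep only the last max_lines lines and say how many were dropped."""
    lines = text.splitlines()
    if len(lines) <= max_lines:
        return text
    dropped = len(lines) - max_lines
    return f"[{dropped} earlier lines omitted]\n" + "\n".join(lines[-max_lines:])


@dataclass
class ContextBudget:
    limit_tokens: int
    used_tokens: int = 0
    warn_at: float = 0.60  # utilization level at which to raise a flag

    def record(self, text: str) -> bool:
        """Add text to the running total; return True once utilization reaches warn_at."""
        # Rough estimate (assumption): about four characters per token.
        self.used_tokens += max(1, len(text) // 4)
        return self.used_tokens / self.limit_tokens >= self.warn_at
```

Wrapping every tool call as budget.record(trim_output(raw)) gives the harness a single place to notice when small, individually cheap interactions have added up.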

The tools aren’t broken. They were designed for a consumer that no longer matches.